CHAI Faculty Paper Accepted to ICML

07 Jun 2019

David Bourgin, Joshua Peterson, Daniel Reichman, Thomas Griffiths, and Stuart Russell had their paper "Cognitive Model Priors for Predicting Human Decisions" accepted to the International Conference on Machine Learning (ICML) 2019. The abstract can be found below:

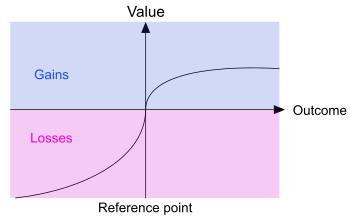

Human decision-making underlies all economic behavior. For the past four decades, human decision-making under uncertainty has continued to be explained by theoretical models based on prospect theory, a framework that was awarded the Nobel Prize in Economic Sciences. However, theoretical models of this kind have developed slowly, and robust, high-precision predictive models of human decisions remain a challenge. While machine learning is a natural candidate for solving these problems, it is currently unclear to what extent it can improve predictions obtained by current theories. We argue that this is mainly due to data scarcity, since noisy human behavior requires massive sample sizes to be accurately captured by off-the-shelf machine learning methods. To solve this problem, what is needed are machine learning models with appropriate inductive biases for capturing human behavior, and larger datasets. We offer two contributions towards this end: first, we construct “cognitive model priors” by pretraining neural networks with synthetic data generated by cognitive models (i.e., theoretical models developed by cognitive psychologists). We find that fine-tuning these networks on small datasets of real human decisions results in unprecedented state-of-the-art improvements on two benchmark datasets. Second, we present the first large-scale dataset for human decision-making containing over 240,000 human judgments across over 13,000 decision problems. This dataset reveals the circumstances where cognitive model priors are useful, and provides a new standard for benchmarking prediction of human decisions under uncertainty.
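
For readers unfamiliar with the idea, the sketch below illustrates only the first step the abstract describes: generating synthetic choice data from a cognitive model, which a neural network could then be pretrained on. It is not the authors' code. It assumes a simplified prospect-theory model with the commonly cited Tversky and Kahneman (1992) parameter estimates, a single weighting parameter for gains and losses, and a logistic choice rule. The function names, the gamble ranges, and the `temperature` parameter are all hypothetical choices made for illustration.

```python
import math
import random


def pt_value(x, alpha=0.88, lam=2.25):
    """Prospect-theory value function: concave for gains, steeper for losses."""
    if x >= 0:
        return x ** alpha
    return -lam * ((-x) ** alpha)


def pt_weight(p, gamma=0.61):
    """Inverse-S probability weighting (one gamma for gains and losses, a simplification)."""
    if p <= 0.0:
        return 0.0
    if p >= 1.0:
        return 1.0
    num = p ** gamma
    return num / ((num + (1 - p) ** gamma) ** (1 / gamma))


def pt_utility(outcome, prob):
    """Subjective utility of a gamble paying `outcome` with probability `prob`, else 0."""
    return pt_weight(prob) * pt_value(outcome)


def choice_prob(gamble_a, gamble_b, temperature=1.0):
    """Probability of choosing A over B under an assumed logistic choice rule."""
    diff = pt_utility(*gamble_a) - pt_utility(*gamble_b)
    return 1.0 / (1.0 + math.exp(-diff / temperature))


def make_synthetic_dataset(n, seed=0):
    """Return n rows of ((a_outcome, a_prob, b_outcome, b_prob), P(choose A))."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        a = (rng.uniform(-50, 50), rng.uniform(0.05, 0.95))  # risky gamble
        b = (rng.uniform(-50, 50), 1.0)                      # sure outcome
        rows.append((a + b, choice_prob(a, b)))
    return rows


synthetic = make_synthetic_dataset(1000)
```

In the approach the abstract outlines, a network pretrained on data like `synthetic` would afterwards be fine-tuned on a much smaller set of real human decisions, so that the cognitive model acts as a prior rather than as the final predictor.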